Supercomputers have long been renowned for their processing power and their ability to handle large volumes of data. Because of those qualities, they have been employed for demanding tasks such as weather forecasting. Twenty years ago, the European Centre for Medium-Range Weather Forecasts (ECMWF) operated the 27th most powerful supercomputer in the world. That computer had 116 cores and could perform over 200 gigaflops, meaning more than 200 billion floating-point operations per second. Today, the same center operates the 27th and 28th most powerful supercomputers in the world, which can handle 20 times the data of the machine it had two decades earlier. This remarkable change has had a major effect on hurricane forecasting and its models.

Retired Navy Rear Adm. Timothy Gallaudet, Ph.D., acting NOAA administrator, says: “NOAA’s supercomputers ingest and analyze billions of data points taken from satellites, weather balloons, airplanes, buoys and ground observing stations around the world each day. Having more computing speed and capacity positions us to collect and process even more data from our newest satellites (GOES-East, NOAA-20 and GOES-S) to meet the growing information and decision-support needs of our emergency management partners, the weather industry and the public.”

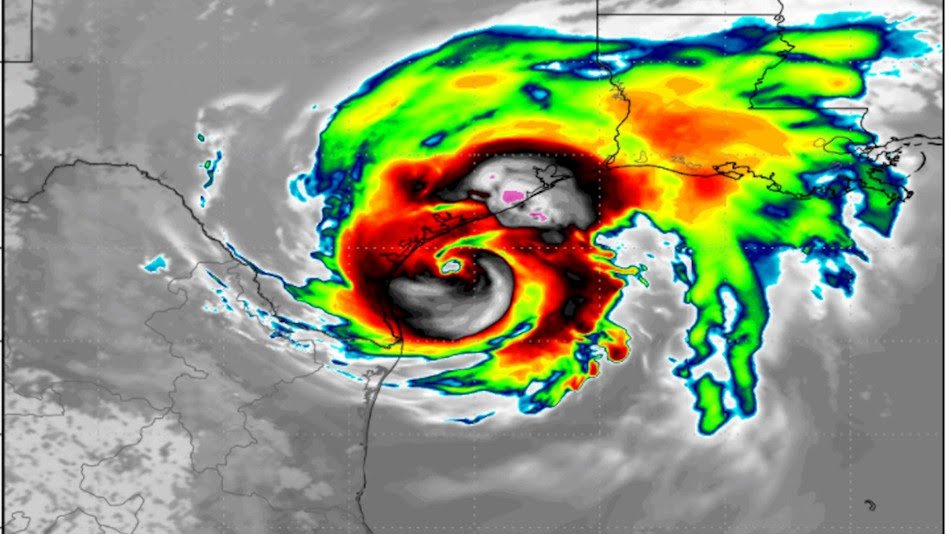

There have been remarkable advancements in both America and Europe in the tools required for the job. According to a report, hurricane track forecasts now carry an error of about 155 nautical miles at five days out. This means that the position the hurricane center predicts five days ahead ends up only about 155 nautical miles from the storm’s actual position. That is a remarkable leap in the warning time available to residents in the path of these powerful storms. It’s a massive positive change, and a major part of it can be attributed to improved supercomputers for weather forecasting.
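To make the idea of a track error concrete, here is a minimal sketch of how the distance between a forecast position and an observed position could be measured in nautical miles. It uses the standard haversine great-circle formula; the function name `track_error_nm` and the sample coordinates are assumptions made for illustration, not the hurricane center’s actual verification code.

```python
import math

# Mean Earth radius expressed in nautical miles (about 6371 km / 1.852)
EARTH_RADIUS_NM = 3440.065


def track_error_nm(forecast, observed):
    """Great-circle distance in nautical miles between two (lat, lon) points in degrees."""
    lat1, lon1 = (math.radians(v) for v in forecast)
    lat2, lon2 = (math.radians(v) for v in observed)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    # Haversine formula
    a = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_NM * math.asin(math.sqrt(a))


# Hypothetical positions, made up for illustration: the forecast is one degree
# of latitude south of where the storm actually was (roughly 60 nautical miles).
forecast_position = (25.0, -80.0)
observed_position = (26.0, -80.0)
print(round(track_error_nm(forecast_position, observed_position), 1))
```

Averaging this distance over many forecasts at the same lead time would give a single figure comparable to the five-day error quoted above.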

Supercomputers – How the Predictions Are Made

The National Hurricane Center forecasts storms using three different models. The first is a US-devised model made specifically for hurricane tracking. The second is the ECMWF’s global model, commonly called the European model. The third is the Global Forecast System (GFS), a global model run in the US. All three models compile and crunch data, and their output is fed into a consensus model that averages the three forecasts. The result is then passed on to agencies and ultimately the public.
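The averaging step can be sketched in a few lines. This is a simplified illustration that takes the plain mean of latitude and longitude, which is reasonable only when the forecast positions are close together and do not straddle the 180° meridian. The function name, dictionary keys and positions are all hypothetical, and operational consensus models are more involved than this.

```python
from statistics import fmean


def consensus_position(positions):
    """Average a list of (lat, lon) forecast positions, in degrees, into one position."""
    lats = [lat for lat, _ in positions]
    lons = [lon for _, lon in positions]
    return fmean(lats), fmean(lons)


# Hypothetical forecast positions for the same storm at the same lead time
forecasts = {
    "hurricane_model": (26.0, -78.0),
    "european_model": (25.0, -79.0),
    "us_global_model": (27.0, -77.0),
}

consensus = consensus_position(list(forecasts.values()))
print(consensus)
```

Giving each model an equal vote means a single outlier can pull the consensus by at most a third of its own error.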

According to all the data compiled regarding hurricanes up until now, the European model has proven to be the most effective. Although it saw slight ups and downs in performance during the historic 2017 hurricane season in the Atlantic, it has been the most consistent basic model for hurricane tracking over the last three years. Its results happen to be better than all other global forecasting models. Only HCCA, a weighted consensus model, has given better results. And HCCA is based on the European model as well.
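A weighted consensus differs from a plain average in that models with a better track record count for more. The sketch below shows only that general idea. The weights are invented for illustration, and this is not a description of how HCCA actually derives or applies its weights.

```python
def weighted_consensus(positions, weights):
    """Weighted mean of (lat, lon) positions in degrees; weights must sum to a positive value."""
    if len(positions) != len(weights):
        raise ValueError("positions and weights must have the same length")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    lat = sum(w * p[0] for p, w in zip(positions, weights)) / total
    lon = sum(w * p[1] for p, w in zip(positions, weights)) / total
    return lat, lon


# Hypothetical positions and weights: the second model is trusted twice as much
positions = [(26.0, -78.0), (25.0, -79.0), (27.0, -77.0)]
weights = [1.0, 2.0, 1.0]
print(weighted_consensus(positions, weights))
```

With equal weights this reduces to a simple average, so the weighting only matters when the models disagree.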

Supercomputers – The Importance of Forecasting

Forecasting matters a great deal, especially when it comes to hurricanes. Most people living in coastal, flood-prone regions are unaware of how much devastation a hurricane can truly cause until it affects them directly. An accurate forecast multiple days in advance can help communities prepare for a hurricane and allow relief operations to be ready once the storm hits. It is safe to say that an accurate forecast can end up saving hundreds, if not thousands, of lives. Recent history has shown how a single error in hurricane tracking can have dire consequences.

In 1998, Hurricane Mitch was making its way across the Atlantic and towards Central America. At the time, the National Hurricane Center’s forecasting was very experimental and was not considered a reliable source. As a result, the center rarely released the information it gathered and would hesitate to reveal its hurricane forecasts ahead of time. As Mitch came roaring across the Atlantic Ocean, the National Hurricane Center released a forecast. According to that forecast, the hurricane would move northward at a relatively measured pace. However, the hurricane ended up moving westward and struck Central America at full force, with Honduras and Nicaragua catching the worst of it.

The forecast made at that point in time was based on the technology available. More accurate forecasting technology could have helped save some of the roughly 11,000 lives lost. Mitch was the most devastating hurricane seen in the Atlantic in the last two centuries. This kind of tragedy has a smaller chance of happening today thanks to advancements in supercomputers.

Supercomputers – The Hidden Benefit of Advanced Forecasting

One benefit rarely discussed in talks about devastation, loss of life and damage to homes and businesses is the reduced cost of evacuation itself. Staging local and federal agencies to ensure an evacuation goes smoothly is tremendously expensive, and there is also the economic loss from workers being “away from their post” for an extended period of time.

Moody’s Analytics put the estimate this week at between $45 billion and $65 billion in damages to homes, businesses and public infrastructure, according to chief economist Mark Zandi.

“I’m sure that’s going to go higher, but that’s our current estimate,” he said, adding that this figure does not include up to an additional $10 billion in lost economic output. And therein lies the hidden cost or rather benefit of being able to track and estimate the trajectory of these storms.

As technology continues to improve at a rapid rate, so too will hurricane forecasting. We should all be excited about the possibilities that lie in front of us, as supercomputers will have the chance to save more lives and prepare our communities for storms, no matter how big or small, better than ever before.